I have data collected in an HCP-like style, with pairs of functional runs acquired with opposite phase-encoding directions. The runs are of equal length (102 volumes), e.g. sub-OA01/func/sub-OA01_task-control_acq-MB_dir-AP_run-01_bold.nii.gz and sub-OA01/func/sub-OA01_task-control_acq-MB_dir-PA_run-02_bold.nii.gz. I am unclear on how to modify the JSON files so that PEPOLAR distortion correction will run in fmriprep. I came across this post: Fmriprep test data with fieldmap, but I’m not sure how to adapt it.

Since fmriprep.workflows.fieldmap.pepolar.init_pepolar_unwarp_wf is looking for an EPI, should I do the following?

1. Extract the first 10 or so volumes from the AP and PA BOLD runs and save them as something like sub-OA01/fmap/sub-OA01_acq-control_dir-AP_epi.nii.gz

2. Modify the JSON to read “IntendedFor”: “func/sub-OA01/func/sub-OA01_task-control_acq-MB_dir-PA_run-02_bold.nii.gz”

3. Similar to 2, but for the AP BOLD run, use a PA EPI

I should also mention that there are issues with banding in the preprocessed files (no unwarping). These images were recorded with a multiband factor of 4. I’m wondering if the artifacts could be due to head motion interacting with the multiband factor. We ruled out slice timing correction, and I’m not sure what else could be causing it. Is this a known issue, and/or is there a better method for processing multiband data?

Could you share the FMRIPREP HTML report (with the figures, which are in a separate folder) as well as the corresponding MRIQC HTML report?

Hi @bryjack0890,

Yes, you can’t use two BOLD series of opposite encoding directions together directly to perform pepolar SDC. Instead, you need to provide _epi.nii.gz files that indicate how you want to use each image with respect to the other. Your suggestions seem like they’re going in the right direction, but just to be clear, I would suggest the following:

Assuming you have two images

sub-OA01/func/sub-OA01_task-control_acq-MB_dir-PA_run-01_bold.nii.gz

sub-OA01/func/sub-OA01_task-control_acq-MB_dir-AP_run-01_bold.nii.gz

You could copy them, respectively, to:

sub-OA01/fmap/sub-OA01_task-control_acq-MB_dir-PA_run-01_epi.nii.gz

sub-OA01/fmap/sub-OA01_task-control_acq-MB_dir-AP_run-01_epi.nii.gz
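To make the renaming concrete, here is a minimal sketch of the func-to-fmap mapping described above. The helper name `bold_to_epi_path` is hypothetical (it is not part of fMRIPrep or any BIDS tool), and it only mirrors the naming pattern used in this post; it computes the destination paths and does not touch any files.

```python
from pathlib import PurePosixPath

BOLD_SUFFIX = "_bold.nii.gz"
EPI_SUFFIX = "_epi.nii.gz"


def bold_to_epi_path(bold_path: str) -> str:
    """Map <subject>/func/*_bold.nii.gz to <subject>/fmap/*_epi.nii.gz.

    Hypothetical helper: it follows the naming used in this thread only.
    """
    p = PurePosixPath(bold_path)
    if p.parent.name != "func" or not p.name.endswith(BOLD_SUFFIX):
        raise ValueError(f"not a func BOLD file: {bold_path}")
    # Swap the suffix and move from the func/ folder to the sibling fmap/ folder.
    epi_name = p.name[: -len(BOLD_SUFFIX)] + EPI_SUFFIX
    return str(p.parent.parent / "fmap" / epi_name)


bold_runs = [
    "sub-OA01/func/sub-OA01_task-control_acq-MB_dir-PA_run-01_bold.nii.gz",
    "sub-OA01/func/sub-OA01_task-control_acq-MB_dir-AP_run-01_bold.nii.gz",
]
epi_paths = [bold_to_epi_path(b) for b in bold_runs]

for src, dst in zip(bold_runs, epi_paths):
    print(f"{src} -> {dst}")
```

The actual copy could then be done with `shutil.copyfile(src, dst)` after creating the `fmap` folder.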

If you want to truncate these to 1 or several volumes, you should. I don’t have particular notions of the best number. fMRIPrep will combine however many volumes you have into a single volume, so if you want to perform your own motion-correction and averaging, you can do that.

These should also have .json sidecar files. Please see Section 8.3.5.4 of the BIDS specification for the necessary metadata. The IntendedFor field should point to the opposite-encoded BOLD image.
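As a rough illustration of such a sidecar, the sketch below builds the JSON for an AP-encoded `_epi` file intended for the PA-encoded BOLD run from the original question. Several things here are assumptions rather than facts about this dataset: the `make_epi_sidecar` helper is hypothetical, the AP/PA to `j-`/`j` mapping is a common convention that must be checked against your own conversion output, and the `TotalReadoutTime` value is a placeholder that has to come from your acquisition parameters. The `IntendedFor` path is written relative to the subject folder.

```python
import json

# ASSUMPTION: a common mapping for axial acquisitions; verify it against
# the metadata produced by your DICOM-to-NIfTI converter.
PE_DIRECTION = {"AP": "j-", "PA": "j"}


def make_epi_sidecar(epi_dir: str, intended_bold: str, total_readout_time: float) -> dict:
    """Build the metadata for one *_epi.nii.gz file (hypothetical helper)."""
    return {
        "PhaseEncodingDirection": PE_DIRECTION[epi_dir],
        "TotalReadoutTime": total_readout_time,
        # Points at the BOLD run acquired with the opposite encoding direction.
        "IntendedFor": [intended_bold],
    }


sidecar = make_epi_sidecar(
    epi_dir="AP",
    intended_bold="func/sub-OA01_task-control_acq-MB_dir-PA_run-02_bold.nii.gz",
    total_readout_time=0.05,  # PLACEHOLDER value, not taken from this dataset
)

sidecar_text = json.dumps(sidecar, indent=2)
print(sidecar_text)
```

The resulting text would be saved next to the matching `_epi.nii.gz` file under the same base name with a `.json` extension, and the PA-encoded `_epi` file would get a mirror-image sidecar pointing at the AP BOLD run.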

Hope this helps.


Thank you. It does. I was toying around with this idea as well. Good to hear that’s the “right” solution.

I wasn’t sure how to upload the folder here, so I’ve included a link to a .zip in my Google Drive containing both the fmriprep and MRIQC outputs for the first two subjects. Some runs have an incorrect phase encoding set and you can ignore those (that issue was solved below). The banding has been replicated several times using slightly different parameters, but it is consistently there. Again, I think it may be due to motion, but I am open to suggestions.

https://drive.google.com/file/d/149T4w1b0RecmB7t01ag9oF0hTuStKHg_/view?usp=sharing

Which participant/task/run do you see the “banding” in? Could you elaborate a bit more on which plot shows it?

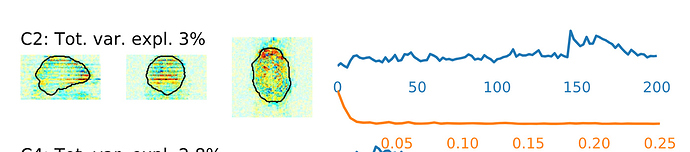

Both subjects posted above show the banding. It’s most obvious in these reports in the ICA component sections of any of the functional runs (task, learn, or rest).

Yes, that’s what I’m seeing in all participants I’ve preprocessed. You’ll notice ICA-AROMA picks some of these components as signal.

The plot I posted was from MRIQC. This is an interaction of motion, spin history effects, and multiband. ICA-AROMA would be a good way to clean this up.