Hi Taylor!

I have recently worked on something which could partially answer your question.

It might not be exactly what you are looking for, as I did not use a Shared Response Model (SRM) but another functional alignment method, based on optimal transport (which I would argue is methodologically close to hyperalignment). Moreover, the dataset is not, strictly speaking, movie-watching; it is closer to “clip-watching” (i.e. watching long sequences of 10-second video clips).

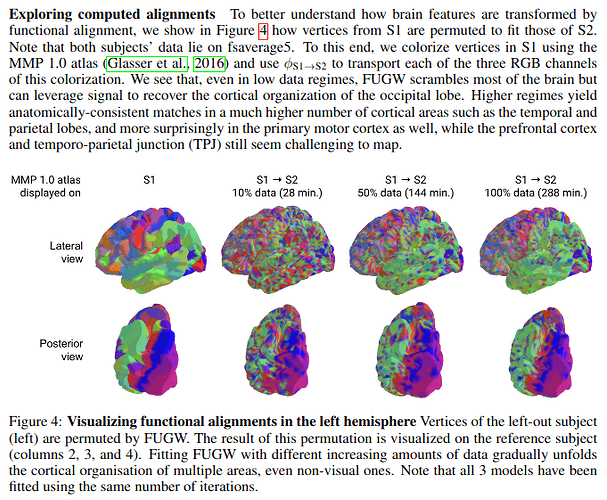

In short, it seems that if you rely solely on functional data, 5 minutes is probably not enough to derive alignments which are anatomically relevant (i.e. some voxels end up matched together even though they are far apart on the cortex). With more data (about two hours), however, there is enough signal to align some functional areas not only in the occipital lobe, but also in the parietal and temporal lobes. That said, it seems that alignments which are not anatomically relevant can still be useful, depending on the downstream task.

Note that I was computing alignments between pairs of subjects, so I was not leveraging information from the entire group of subjects (which you could do in part with an SRM, although I am not sure it would work).
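
To make "alignment between a pair of subjects" a bit more concrete, here is a minimal sketch using plain entropic optimal transport (Sinkhorn iterations) in NumPy. It is only an illustration and not the method from the pre-print: the function names, the correlation-based cost, the uniform voxel weights and the `reg` value are all assumptions made for this example, and it uses functional data only (no anatomical constraint).

```python
import numpy as np


def sinkhorn_plan(cost, reg=0.1, n_iter=500):
    """Entropic OT plan with uniform marginals for a (n_src, n_tgt) cost matrix.

    Assumption: `reg` is expressed relative to the largest cost value.
    """
    n_src, n_tgt = cost.shape
    a = np.full(n_src, 1.0 / n_src)  # uniform mass on source voxels
    b = np.full(n_tgt, 1.0 / n_tgt)  # uniform mass on target voxels
    kernel = np.exp(-cost / (reg * cost.max()))
    u = np.ones(n_src)
    for _ in range(n_iter):
        v = b / (kernel.T @ u)
        u = a / (kernel @ v)
    return u[:, None] * kernel * v[None, :]


def align_pair(source, target, reg=0.1):
    """Match voxels of two subjects from their time courses.

    source, target: arrays of shape (n_timepoints, n_voxels), recorded
    while both subjects saw the same stimuli. Voxels are assumed to have
    non-constant time courses (non-zero standard deviation).
    Returns a transport plan of shape (n_source_voxels, n_target_voxels).
    """
    zs = (source - source.mean(axis=0)) / source.std(axis=0)
    zt = (target - target.mean(axis=0)) / target.std(axis=0)
    corr = zs.T @ zt / source.shape[0]  # voxel-by-voxel correlation
    cost = 1.0 - corr                   # similar time courses are cheap to match
    return sinkhorn_plan(cost, reg=reg)


def transport(source, plan):
    """Project source data onto target voxels (barycentric projection)."""
    weights = plan / plan.sum(axis=0, keepdims=True)
    return source @ weights
```

Calling `align_pair(source, target)` returns a soft voxel-to-voxel matching, and `transport(source, plan)` predicts the target subject's data from the source subject's. Because nothing here penalises matching voxels that are far apart on the cortex, this kind of purely functional cost is exactly where anatomically irrelevant matches can appear when data is scarce.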

You can find more in this pre-print: [2312.06467] Aligning brain functions boosts the decoding of visual semantics in novel subjects

For context, I was using functional alignment to study how it can help to transfer brain decoders trained in one individual to another individual. Maybe Figure 4 is what is most relevant to you: