Hi all,

I’m a physicist at the Donders Institute of Radboud University in the Netherlands. I’m involved in setting up MR acquisition protocols, but I mostly work on processing the data that comes out of them. To that end, I developed BIDScoin, a very user-friendly Python package (it comes with a #gui ;-)) for converting that data into #bids.

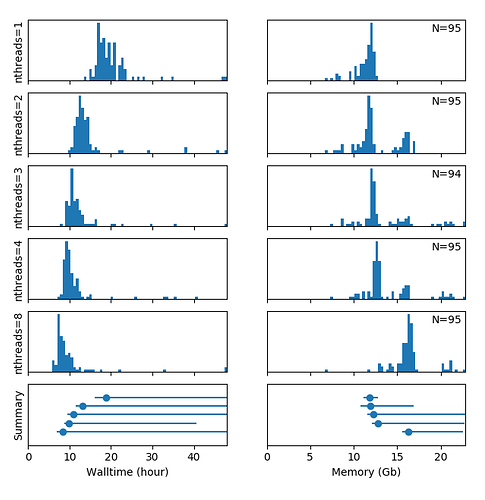

I also support much of the #fmriprep processing in our center and made a small tool to process BIDS datasets on our #hpc cluster (using #singularity containers). I just finished an fMRIPrep v20.2.1 #multi-threading #benchmark on our cluster, which I figured might interest some of you, hence this post. Here is what I found:

Conclusions:

- The speed-up is small when the number of compute threads is increased beyond 3

- Most jobs in the same batch require a comparable walltime, but a few take much longer. NB: The spread of the distributions is increased by the heterogeneity of the DCCN compute cluster and by occasional inconsistencies (repeats) in the data acquisition of certain (heavily moving) participants

- The #memory usage of most jobs is comparable and largely independent of the number of threads, but distinct higher peaks appear in the distribution as the number of threads increases
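To make the "diminishing returns above 3 threads" observation concrete, one could compute the speed-up and parallel efficiency from per-job walltimes. This is a minimal sketch, not the hpc_resource_usage.py script from the repository; the function name `speedup_table` and the input layout (a dict mapping thread count to a list of walltimes in hours) are assumptions. It uses the median rather than the mean because a few jobs in each batch take much longer than the rest.

```python
from statistics import median


def speedup_table(walltimes):
    """Hypothetical helper: summarise walltimes per thread count.

    walltimes maps a thread count to a list of per-job walltimes (hours).
    Returns {threads: (median_hours, speedup, efficiency)}, with speed-up
    measured relative to the smallest thread count in the input.
    """
    base_threads = min(walltimes)
    base = median(walltimes[base_threads])
    table = {}
    for n in sorted(walltimes):
        t = median(walltimes[n])  # median is robust to the few slow jobs
        speedup = base / t
        # Efficiency: achieved speed-up divided by the ideal (linear) speed-up
        efficiency = speedup / (n / base_threads)
        table[n] = (t, speedup, efficiency)
    return table
```

An efficiency that drops well below 1 at higher thread counts would correspond to the plateau described in the first conclusion.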

All the details and code to distribute BIDS jobs on the HPC cluster (fmriprep_sub.py), as well as the data (i.e. PBS logfiles) and the code to generate the benchmark plot (hpc_resource_usage.py), can be downloaded from GitHub.
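As a hedged illustration of how walltime and peak memory might be extracted from PBS logs, the sketch below assumes space-separated Torque-style `resources_used.<name>=<value>` tokens, as they appear in Torque accounting records. The format of the logfiles in the repository may differ, and `parse_resources` and its helpers are hypothetical names.

```python
import re

# Assumed log format: Torque/PBS-style "resources_used.<name>=<value>" tokens
_TOKEN = re.compile(r"resources_used\.(\w+)=(\S+)")
_UNITS = {"b": 1, "kb": 1024, "mb": 1024**2, "gb": 1024**3, "tb": 1024**4}


def hms_to_hours(value):
    """Convert an [HH:]MM:SS string to hours."""
    seconds = 0
    for part in value.split(":"):
        seconds = seconds * 60 + int(part)
    return seconds / 3600


def mem_to_gib(value):
    """Convert a PBS memory string such as '1234567kb' to GiB."""
    m = re.fullmatch(r"(\d+)([kmgt]?b)?", value.lower())
    if m is None:
        raise ValueError(f"unrecognised memory value: {value!r}")
    number, unit = m.groups()
    return int(number) * _UNITS[unit or "b"] / 1024**3


def parse_resources(line):
    """Return walltime (hours) and memory (GiB) found in one log line."""
    fields = dict(_TOKEN.findall(line))
    result = {}
    if "walltime" in fields:
        result["walltime_h"] = hms_to_hours(fields["walltime"])
    if "mem" in fields:
        result["mem_gib"] = mem_to_gib(fields["mem"])
    return result
```

Grouping the parsed values by the thread count of each job would give the walltime and memory distributions summarised in the conclusions.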

Would love to get your ideas and/or comments on the irregular memory usage of my multi-threaded jobs!
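One possible way to quantify the distinct higher memory peaks is a robust outlier rule applied per thread count, for example flagging jobs whose memory exceeds the median plus k times the median absolute deviation (MAD). This is a generic, swapped-in technique rather than part of the benchmark code; `flag_memory_peaks` and the default `k=3.0` are assumptions.

```python
from statistics import median


def flag_memory_peaks(mem_by_threads, k=3.0):
    """Hypothetical helper: flag unusually high memory usage per thread count.

    mem_by_threads maps a thread count to a list of per-job memory values.
    Returns {threads: [indices of jobs above median + k * MAD]}.
    Note: if the MAD is 0, any job above the median is flagged.
    """
    flagged = {}
    for n, mems in mem_by_threads.items():
        med = median(mems)
        mad = median(abs(m - med) for m in mems)  # robust spread estimate
        threshold = med + k * mad
        flagged[n] = [i for i, m in enumerate(mems) if m > threshold]
    return flagged
```

Cross-checking the flagged jobs against participants with repeated acquisitions could help separate data-driven peaks from thread-related ones.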

Sorry for the long intro post

Marcel